Elon Musk has long been at the forefront of technological innovation and futuristic business models, so it might come as a surprise to some that he is frightened by Silicon Valley’s rapid adoption of artificial intelligence (AI) and robotics.

As the argument rages on, you have to wonder if he actually has a point. Is there a critical need for AI and robotics standards that are supported by a kill switch?

According to Musk (the man behind PayPal, Tesla, and SpaceX), AI that has been developed to the point of becoming centralized is highly likely to be unstoppable. He also thinks that AI is advancing much faster than people realize and has the real potential to destroy the human race.

This idea echoes statements he made a few years ago suggesting that human integration with AI is inevitable, and that a human-AI symbiote would therefore be the best way to keep more control over the intelligence.

According to theoretical physicist Stephen Hawking, we should all fear runaway technological advancement, because it will create a situation in which intelligent programs and robots can rapidly redesign themselves in an ever-evolving cycle of upgrades that human beings can’t match.

Furthermore, he believes that when AI surpasses human intelligence, we will be faced with dire consequences. These fears are also shared by the experts at the Centre for the Study of Existential Risk (CSER) and the Leverhulme Centre for the Future of Intelligence, who listed AI as their biggest concern for the future.

What’s more, they stated that autonomous AI could achieve human-level intelligence by 2075. If left ungoverned, such intelligent robots could initiate a nuclear winter and annihilate the entire human race.

However, Demis Hassabis, co-founder of the London laboratory DeepMind, begs to differ. In his view, while AI has been experiencing a period of rapid acceleration, it’s still not the all-powerful, self-evolving software that Musk fears.

The AI available today powers Google’s search engine, basic digital assistants like Apple’s Siri, and the photo-tagging technology used by Facebook. But it’s no secret that the end goal is to build a highly adaptable, self-learning AI that can mimic human learning.

It’s fair to conclude, then, that both Hassabis and Musk are right. There is a very real danger in leaving AI advances unchecked, but approaching the subject through apocalyptic scenarios is probably futile (and more of a distraction).

Still, even Hassabis’s fellow DeepMind co-founder, Shane Legg, believes that technology will contribute to human extinction.

While it’s true that AI offers huge benefits in the short term, it’s also important to engage in this discussion and encourage a dialogue that can lead to robotics standards.

This means setting up protocols to regulate AI and robots now, rather than after they have achieved superior intelligence. It might be a good idea to explore building kill switches into AI, but a global governing body will also have to answer some ethical questions.

For example, should AI be afforded rights just like humans? What’s more, when AI causes harm, who should be held responsible?

Science-fiction writer Isaac Asimov contemplated these questions in the middle of the 20th century. He proposed a set of laws for robots to ensure the protection of the human race.

His first law required that a robot not injure a human being or, through inaction, allow a human being to come to harm. His second law required robots to obey orders given by humans, as long as those orders didn’t conflict with the first law.

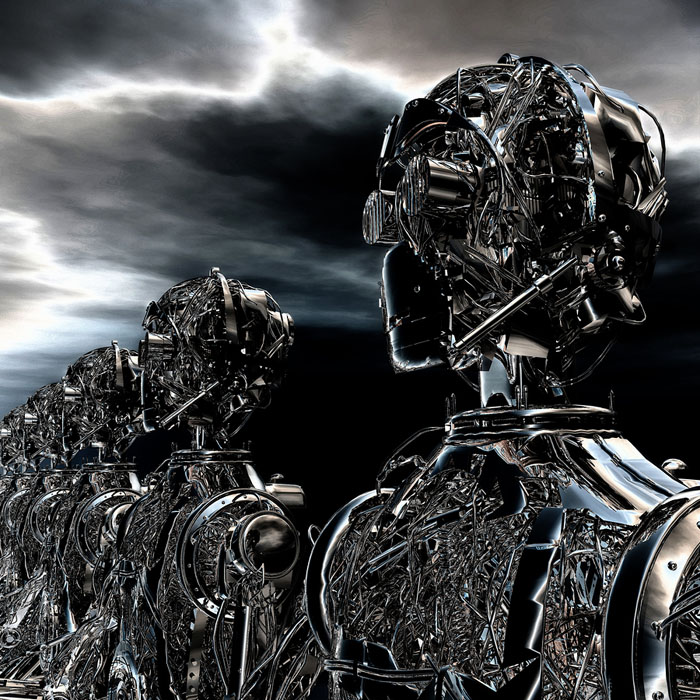

His third law required robots to protect their own existence, as long as doing so didn’t conflict with the first two laws. While these fictional laws may conjure up images of Arnold Schwarzenegger and the first Terminator film, they’re probably a good place to start.

All the noise Musk has been making about his AI fears hasn’t gone unnoticed. In fact, many organizations are making their own efforts to systematically regulate AI. Amazon, Facebook, Google, IBM, Microsoft, and others have joined forces as the Partnership on Artificial Intelligence to Benefit People and Society. The Orwellian-sounding group hopes to develop best practices for AI and robotics while operating an open platform that encourages extensive discussion of the subject. The U.S. government also released its guidance for autonomous vehicles last year.

The U.K.’s Royal Society, one of the oldest scientific organizations in the world, and the British Academy also published a joint report calling for the creation of a national body to govern the evolution of AI. While many may not have been as loud as Musk, it’s clear that these concerns are shared across the world.

However, disagreements persist, so we’re still far from a consensus on how any of this will be implemented or on what these robotics standards will look like. We will have to wait and see how it all plays out.

One thing is for sure: this discussion is taking place at the right time, and many of the planet’s great minds have been engaged. This is a step in the right direction, hopefully leading to kill switches and best practices moving forward.
